For most of my Kubernetes deployments, I use Longhorn for persistent storage. This gives my pods a distributed, replicated storage medium. It also has backup features and ‘just works’. However, from time to time, Longhorn’s replication isn’t what you want. One such case was when I installed Jellyfin: I didn’t want my DVD collection – many TBs of data – to be replicated across my Longhorn nodes.

I looked at various options, including the very nice MinIO and S3, but concluded that my data was better suited to NFS. As you will see from other blog posts, I run TrueNAS and have many different things stored and backed up there.

Fortunately, installing a persistent volume provisioner for NFS is as easy as installing a Helm chart. In this case, I settled on the NFS Subdirectory External Provisioner Helm chart.

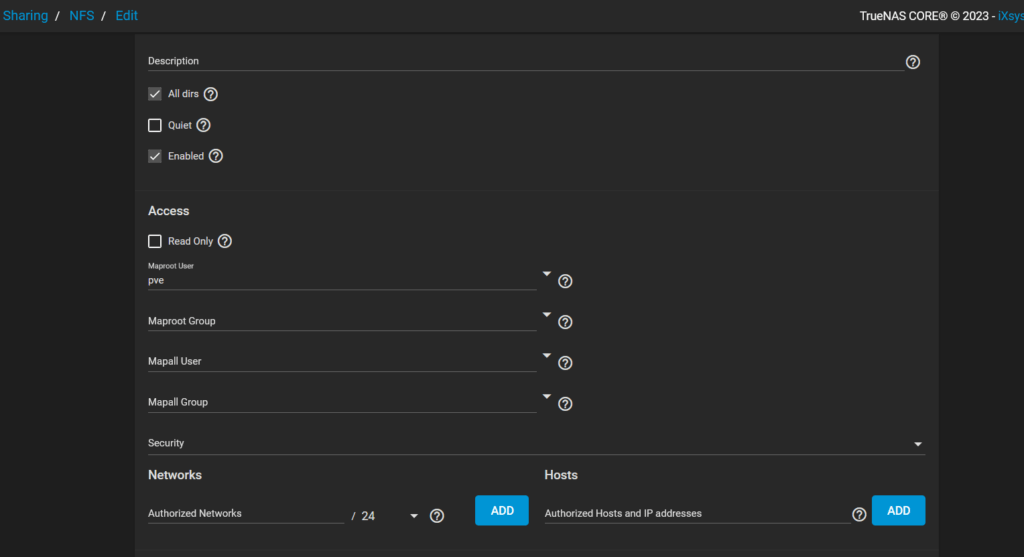

Before you can add and configure the storage provisioner, you need to set up and configure your NFS share. In my case, I set up a simple NFS share at nfs://192.168.1.95/mnt/HDDPool2/kubernetesa – I would add ‘b’, ‘c’ etc. as and when I needed them.

With the share in place, I tested connectivity to it from another Ubuntu machine on my network (see NFS and Proxmox containers don’t mix for the fun I had testing this out) and then went on to install the NFS provisioner system with these commands: –

```shell
helm repo add nfs-subdir-external-provisioner https://kubernetes-sigs.github.io/nfs-subdir-external-provisioner/
helm repo update
helm install nfs-subdir-external-provisioner nfs-subdir-external-provisioner/nfs-subdir-external-provisioner \
  --set nfs.server=192.168.1.95 \
  --set nfs.path=/mnt/HDDPool2/kubernetesa \
  --set storageClass.name=nfs-client \
  --set storageClass.provisionerName=k8s-sigs.io/nfs-subdir-external-provisioner
```

It is easy enough to create multiple provisioners that point to different NFS servers and shares by re-running the above install command and specifying a different deployment name, provisioner name, storage class name, server, and NFS path.
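To make the "different name for everything" point concrete, the install command can be wrapped in a small function that derives each name from a short suffix. This is only a sketch: the function name `install_nfs_provisioner`, the `-b` style suffix scheme, and the `kubernetesb` share are assumptions for illustration, not something the chart requires.

```shell
# Sketch: install one provisioner per NFS share, deriving every name from a
# short suffix. install_nfs_provisioner and the naming scheme are hypothetical.
install_nfs_provisioner() {
  local suffix="$1" server="$2" path="$3"

  # Release name, storage class name and provisioner name must all be unique
  # per install, so each one gets the suffix appended.
  helm install "nfs-subdir-external-provisioner-${suffix}" \
    nfs-subdir-external-provisioner/nfs-subdir-external-provisioner \
    --set nfs.server="${server}" \
    --set nfs.path="${path}" \
    --set storageClass.name="nfs-client-${suffix}" \
    --set storageClass.provisionerName="k8s-sigs.io/nfs-subdir-external-provisioner-${suffix}"
}
```

Calling `install_nfs_provisioner b 192.168.1.95 /mnt/HDDPool2/kubernetesb` would then create a second storage class called `nfs-client-b`, assuming that share exists.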

To make use of the provisioner, you then need to reference the new storage class through storageClassName in your manifests like this: –

```yaml
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: demo-nfs-claim
spec:
  storageClassName: nfs-client
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      storage: 1Gi
```

Then use kubectl to create the claim with the command: –

```shell
kubectl create -f pvc.yaml
```

This should then create the persistent volume claims, and the underlying infrastructure should create a folder within the NFS share.
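Whether the claim was actually provisioned can be checked by reading its phase, which stays at Pending until a volume is bound. The following is a minimal sketch of a polling loop. `wait_for_pvc_bound` is a hypothetical helper name, and the 60-second default timeout is an arbitrary choice.

```shell
# Sketch: poll a PVC until it reports the Bound phase or a timeout expires.
# wait_for_pvc_bound is a hypothetical helper, not part of kubectl.
wait_for_pvc_bound() {
  local claim="$1" timeout="${2:-60}" waited=0 phase

  while [ "$waited" -lt "$timeout" ]; do
    # .status.phase is Pending until a volume has been provisioned and bound.
    phase="$(kubectl get pvc "$claim" -o jsonpath='{.status.phase}')"
    if [ "$phase" = "Bound" ]; then
      echo "$claim is Bound"
      return 0
    fi
    sleep 2
    waited=$((waited + 2))
  done

  echo "$claim is still ${phase:-unknown} after ${timeout}s" >&2
  return 1
}
```

For the claim above this would be called as `wait_for_pvc_bound demo-nfs-claim`.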

For me, this did not happen. This was because, although I could mount the NFS file system in my VMs, the provisioner couldn’t. Eventually, I found that I needed to install the NFS client utilities (nfs-common) on each of my Kubernetes worker nodes. This was easy enough; I just issued the following commands on each of the worker nodes: –

```shell
apt-get update
apt install nfs-common
```

However, that still didn’t solve the problem. When I looked in the PVC logs, I could see a permissions error, and the PVC stayed in a ‘Pending’ state.

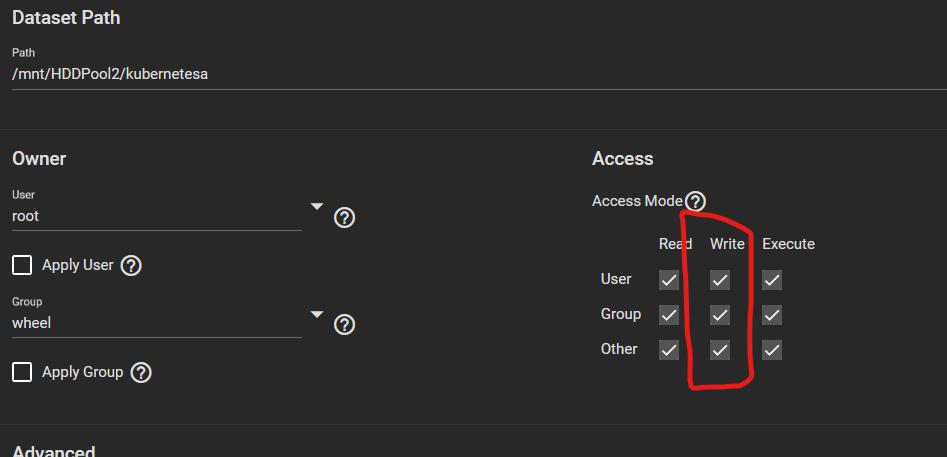
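For a claim stuck in Pending, two places usually worth looking are the claim's events and the provisioner's own logs. The sketch below bundles both. `debug_pending_pvc` is a hypothetical helper, and the default deployment name is an assumption based on the Helm release name used in this post, so check it with `kubectl get deployments` if yours differs.

```shell
# Sketch: gather the usual evidence for a PVC stuck in Pending.
# debug_pending_pvc is a hypothetical helper; the default deployment name
# assumes the Helm release is called nfs-subdir-external-provisioner.
debug_pending_pvc() {
  local claim="$1" deployment="${2:-nfs-subdir-external-provisioner}"

  # The Events section at the end of the output lists provisioning failures.
  kubectl describe pvc "$claim"

  # Provisioner logs, where errors from the NFS side may also surface.
  kubectl logs "deployment/${deployment}" --tail=50
}
```

Running `debug_pending_pvc demo-nfs-claim` prints both outputs one after the other.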

The problem was actually the share on TrueNAS. I had to change the dataset to have write permissions like this: –

After that, when I rechecked the PVC, it had been created.

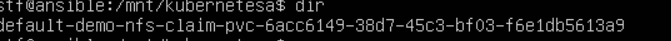

Using my Ubuntu VM, I did an ‘ls -al /mnt/HDDPool2/kubernetesa’, and it listed this: –

The provisioner had created a folder named after the namespace, the claim name and the PV name (pvc- followed by a GUID). However, that was not the end of the journey. Although the folder had been created, trying to create files in it resulted in a permissions error. It took quite a while to determine what the problem was, as pods were able to access the mount and create folders, but not files.
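The folder name is predictable, which helps when hunting for a claim's data on the share. By default the provisioner joins the namespace, claim name and PV name with hyphens. The sketch below assumes that default is in effect (no custom path pattern on the storage class), and `expected_nfs_dir` is a hypothetical helper name.

```shell
# Sketch: predict the folder the provisioner creates for a claim, assuming the
# default ${namespace}-${pvcName}-${pvName} pattern with no override.
# expected_nfs_dir is a hypothetical helper.
expected_nfs_dir() {
  local namespace="$1" claim="$2" pv_name="$3"
  echo "${namespace}-${claim}-${pv_name}"
}
```

The PV name for a bound claim can be read with `kubectl get pvc demo-nfs-claim -o jsonpath='{.spec.volumeName}'` and passed in as the third argument.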

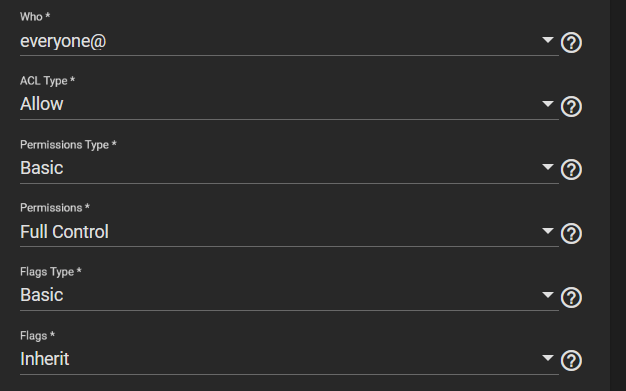
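The "folders but not files" symptom can be reproduced outside Kubernetes by mounting the share on a test machine and trying each operation separately. This is a minimal sketch rather than a record of the commands actually used: `check_nfs_share` is a hypothetical helper, it assumes nfs-common is installed and that you have sudo rights, and the result depends on which user runs it.

```shell
# Sketch: mount an NFS export on a scratch directory and try to create a
# folder and a file in it. check_nfs_share is a hypothetical helper name.
check_nfs_share() {
  local server="$1" export_path="$2" mnt rc=0
  mnt="$(mktemp -d)" || return 1

  # Mount the export; this needs the NFS client tools (nfs-common on Ubuntu).
  if ! sudo mount -t nfs "${server}:${export_path}" "$mnt"; then
    rmdir "$mnt"
    return 1
  fi

  # Test folder and file creation separately, since they can fail independently.
  mkdir "$mnt/nfs-check-dir" || { echo "cannot create folders" >&2; rc=1; }
  touch "$mnt/nfs-check-file" || { echo "cannot create files" >&2; rc=1; }

  # Remove whatever was created, then unmount and drop the scratch directory.
  rm -rf "$mnt/nfs-check-dir" "$mnt/nfs-check-file"
  sudo umount "$mnt"
  rmdir "$mnt"
  return "$rc"
}
```

For the share in this post the call would be `check_nfs_share 192.168.1.95 /mnt/HDDPool2/kubernetesa`.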

As with most of these NFS problems, the cause was the share settings in TrueNAS. After a little trial and error, I found that I needed to set the permissions on the pool and on the share like this: –

This is not secure, but it works and lets you finish setting up the persistent volume claims, etc., and you can then tighten the permissions to suit.

This means I can continue installing Jellyfin and move my DVD collection to the persistent volume without replicating the files across my Kubernetes nodes.